Why Demonstrated Risk Is Ignored

Why do people acknowledge evidence of harm and then proceed as if it doesn't exist? A deep dive into structural risk dismissal.

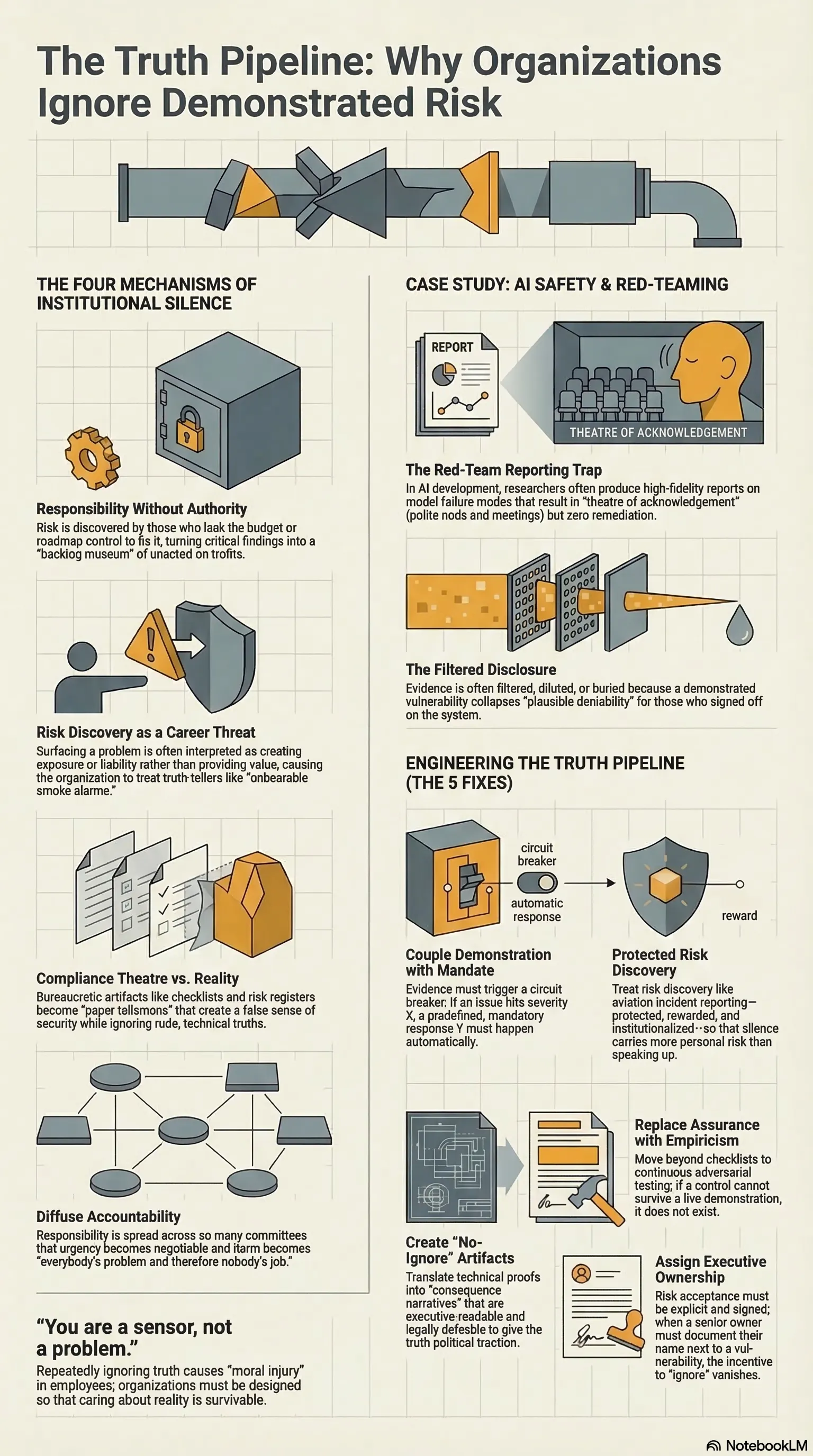

You show someone evidence of harm. They acknowledge the evidence. Then they proceed as if the evidence doesn’t exist. This isn’t stupidity or denial—it’s something more structural, and more dangerous.

The pattern appears across domains: financial systems that ignored early warning signs before 2008, pandemic preparedness plans that sat unexecuted despite clear indicators, AI systems deployed at scale despite documented failure modes. The evidence was available. The risk was demonstrated. The dismissal happened anyway.

*Why Demonstrated Risk Is Ignored* investigates the psychological and organisational mechanisms that make this pattern so reliable. The problem is not a lack of information. It is how competing incentives, social proof, narrative momentum, and temporal discounting combine to make demonstrated risk feel less real than optimistic projection, even when the projection has no evidential basis and the risk is sitting in front of you, documented and measured.

The research draws on case studies from financial regulation, public health, and technology deployment, with a particular focus on what these patterns mean for AI governance, because we’re now building systems whose failure modes may not offer a second chance to update our priors.

The question the paper asks is not whether we can see the risks. We can. The question is why we keep looking past them—and what structural changes might make that harder to do.