The Legal AI Trust Deficit

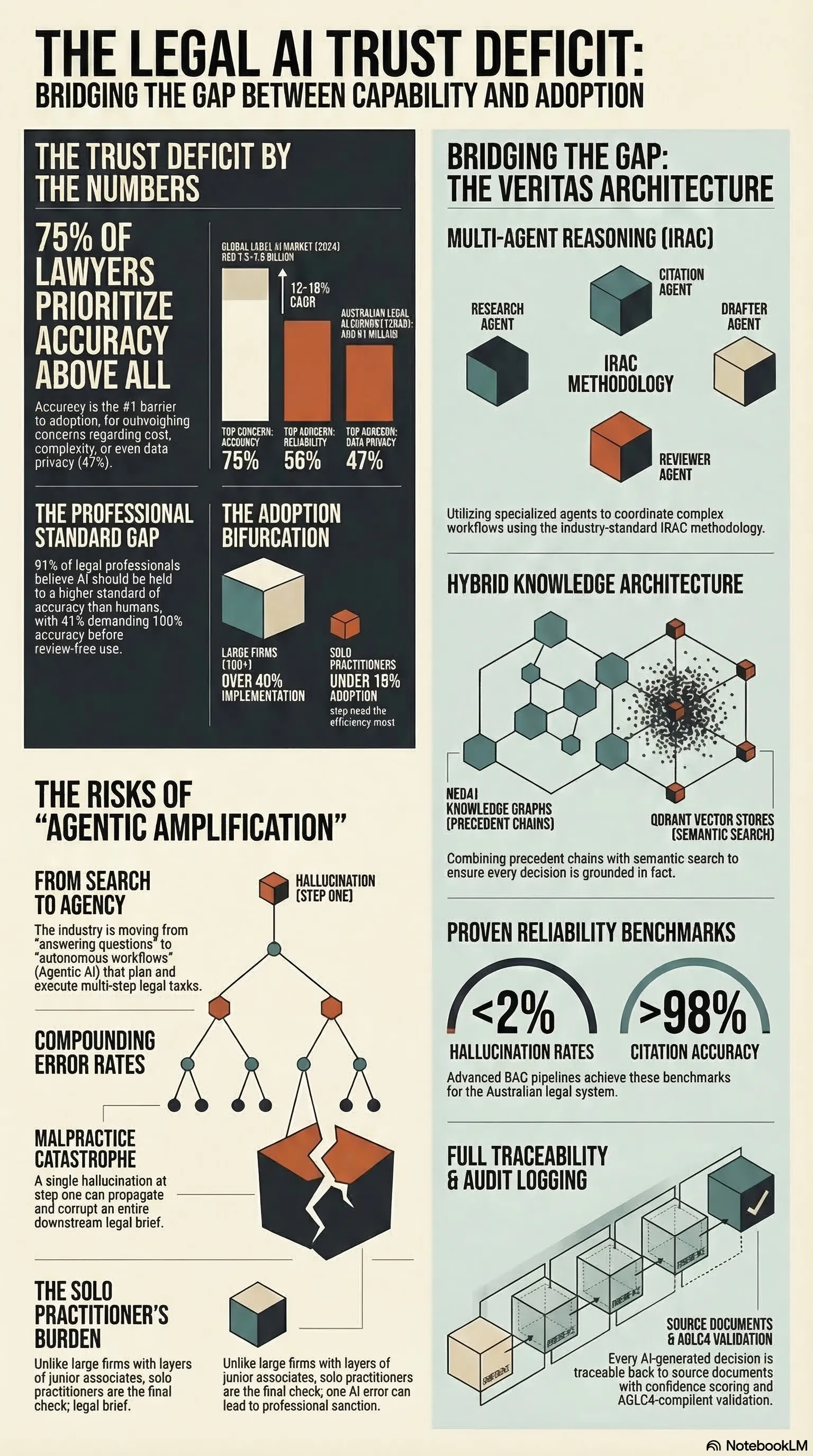

75% of lawyers cite accuracy as their top AI concern. The legal profession's core values are in direct tension with current AI capabilities.


Three quarters of lawyers say accuracy is their top concern with AI tools. Not cost, not complexity, not privacy — accuracy. That single statistic tells you almost everything you need to know about why legal AI adoption looks nothing like AI adoption in other industries.

I’ve been researching the legal AI market as part of a broader project on verifiable AI systems, and the pattern that keeps emerging is what I’d call the efficiency-trust deficit: an enormous demand for automation colliding head-on with deep, structurally justified skepticism about whether any of these tools can be trusted with real legal work.

The Numbers Behind the Hesitation

The global legal AI market is growing rapidly — valuations around USD 1.5–1.9 billion in 2024, with projections of 13–18% compound annual growth over the next decade. In Australia specifically, the legal AI segment is projected to approach AUD 91 million by 2030. The demand signal is unmistakable.
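To make the growth figures concrete, here is a quick back-of-the-envelope sketch that compounds the quoted 2024 valuations at the quoted growth rates. It assumes "the next decade" means ten years of constant growth and pairs the low valuation with the low rate and the high with the high; both are my simplifications, not part of the underlying projections.

```python
def project_market(base_usd_bn: float, cagr: float, years: int) -> float:
    """Compound a base-year market size forward at a constant annual growth rate."""
    return base_usd_bn * (1 + cagr) ** years


# Figures quoted in the text: USD 1.5-1.9 billion in 2024, 13-18% CAGR.
# Assumption: ten years of constant growth from the 2024 base.
low = project_market(1.5, 0.13, 10)
high = project_market(1.9, 0.18, 10)

print(f"Implied range after ten years: USD {low:.1f}-{high:.1f} billion")
```

Under those assumptions the market ends the decade somewhere between roughly 3.4 and 5.2 times its 2024 size, which is why the demand signal is hard to ignore.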

But the adoption numbers tell a different story. Over 40% of large firms (100+ lawyers) have implemented or are seriously considering AI tools. For solo practitioners, that figure drops to under 18%. The gap isn’t about awareness or interest. It’s about trust.

When you survey legal professionals about their concerns, accuracy dominates at nearly 75%, followed by reliability at 56% and data privacy at 47%. These aren’t the objections of technophobes. They’re the rational concerns of professionals whose livelihoods depend on getting things right.

Why Legal Trust Is Different

In most industries, AI accuracy concerns are about efficiency — a wrong recommendation wastes time, a bad prediction costs money. In law, the stakes are categorically different.

A lawyer has a professional duty of competence. Every fact asserted, every case cited, every legal argument advanced carries the practitioner’s personal and professional guarantee. When an AI system fabricates a case citation — the now-infamous “hallucination” problem — it isn’t a minor software glitch. It’s a direct path to professional sanction, malpractice liability, and reputational destruction.

Some 91% of professionals believe AI should be held to a higher standard of accuracy than humans, and in the legal context that isn’t unreasonable. They’re being precise about what the technology needs to deliver before it can be safely integrated into professional workflows. And 41% demand 100% accuracy before they’d use AI output without human review, a standard that no current system meets.

The profession’s conservatism here isn’t a bug. It’s a feature of a system designed to protect clients from errors that can’t be undone.

The Solo Practitioner Problem

The trust deficit hits hardest where the need for efficiency is most acute. Solo and small-firm lawyers are drowning in administrative work. They spend their days balancing high-value legal analysis against a mountain of non-billable tasks — document review, preliminary research, drafting standard communications, practice management.

Large firms can absorb the risk of early AI adoption. They have layers of review — junior associates, paralegals, law librarians — that can validate AI-generated work before it reaches a client or a court. They can afford enterprise platforms at over USD 1,000 per user per month. They can treat AI as one input among many in a multi-layered quality assurance process.

A solo practitioner is the final check. There’s no junior associate to catch the hallucinated citation. There’s no budget for a platform that costs more than their office rent. And their reputation — their single most valuable professional asset — is on the line with every piece of work product.

This creates a painful irony: the practitioners who would benefit most from AI-driven efficiency are precisely the ones who can least afford the risk of trusting it.

The Australian Context

Australia’s legal AI landscape mirrors the global pattern with some local texture. The broader legal services market sits at roughly AUD 33–34 billion, and a growing cohort of Australian legal tech startups — several incubated through accelerators like the Lander & Rogers LawTech Hub — are tackling specific niches. You can find tools for automated due diligence searches across government registers, no-code platforms for building legal workflow agents, and affordable research assistants targeting Australian, UK, and New Zealand case law.

But the same bifurcation exists here. The global platforms (Thomson Reuters’ CoCounsel, LexisNexis’ Protégé) are building expensive ecosystem plays designed for enterprise customers. The local startups are solving narrow problems well but aren’t addressing the fundamental trust architecture that the broader market demands. The solo practitioner in suburban Sydney or regional Queensland faces the same gap as their counterpart in Kansas City: acute need, inadequate options.

The Agentic Amplification Problem

The trust deficit is about to get worse before it gets better. The industry is moving toward agentic AI — systems that don’t just answer questions but autonomously plan, reason through, and execute multi-step workflows. Instead of asking an AI to summarise a case, you assign it an objective: analyse this complaint and prepare a draft motion to dismiss.

The potential productivity gains are enormous. The reliability risks are also enormous, and they compound. A hallucinated citation in a simple question-and-answer interaction is a correctable mistake. A misapplied legal rule embedded six steps deep in an autonomous workflow that drafts and files a legal brief is a potential malpractice catastrophe. Each step in an agentic chain that lacks rigorous verification is a point where errors can propagate and corrupt everything downstream.
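The compounding effect is easy to underestimate, so here is a minimal sketch of the arithmetic. It assumes each step succeeds independently with the same probability, and the 95% per-step accuracy and 90% catch rate are purely illustrative numbers I picked, not measurements of any real system. The function names are hypothetical.

```python
def chain_reliability(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct (independent steps)."""
    return step_accuracy ** steps


def verified_step_accuracy(step_accuracy: float, catch_rate: float) -> float:
    """Per-step accuracy when a check catches and corrects a share of errors."""
    return step_accuracy + (1 - step_accuracy) * catch_rate


# Illustrative assumption: each unverified step is right 95% of the time.
for steps in (1, 3, 6, 10):
    unverified = chain_reliability(0.95, steps)
    # Illustrative assumption: verification catches 90% of errors at each step.
    verified = chain_reliability(verified_step_accuracy(0.95, 0.9), steps)
    print(f"{steps:>2} steps: unverified {unverified:.3f}, verified {verified:.3f}")
```

With these numbers, a six-step workflow without checks is fully correct only about 74% of the time, while the same workflow with per-step verification stays above 97%. Real steps are not independent and real errors are not uniform, but the direction of the effect is the point.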

The incumbents building these agentic systems (Thomson Reuters with guided workflows, Harvey AI with its workflow builder) are staking their futures on this paradigm. But none of them has solved the verification problem at the architectural level. They’re building more powerful systems on the same foundations that practitioners already don’t trust for simpler tasks.

What Would Actually Close the Gap

The market is telling us exactly what it needs, if we listen. It’s not more features. It’s not faster outputs. It’s not bigger language models. It’s provable reliability and transparent reasoning at a price point that solo and small-firm practitioners can justify.

That means systems where every assertion links back to a verifiable source. Where the reasoning chain is inspectable, not hidden inside a black box. Where the system communicates its limitations clearly rather than projecting false confidence. Where a practitioner can audit the AI’s work with the same rigour they’d apply to a junior associate’s draft.
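As a rough illustration of what that could look like in data terms, here is a hypothetical sketch: each assertion carries its sources, its reasoning, and its caveats, and a simple audit pass flags anything a reviewer shouldn't rely on yet. Every name here (`Source`, `Assertion`, `audit`) is invented for this example and does not describe any existing product or the VERITAS design; the citation is a placeholder, not a real case.

```python
from dataclasses import dataclass, field


@dataclass
class Source:
    citation: str   # placeholder text; a real system would hold a full citation
    locator: str    # pinpoint reference, such as a paragraph or page
    verified: bool  # True only once checked against an authoritative record


@dataclass
class Assertion:
    text: str
    sources: list[Source] = field(default_factory=list)
    reasoning: str = ""                               # inspectable reasoning step
    caveats: list[str] = field(default_factory=list)  # stated limitations


def audit(assertions: list[Assertion]) -> list[str]:
    """Return flags for assertions that lack verified sources or reasoning."""
    flags = []
    for i, a in enumerate(assertions, start=1):
        if not a.sources:
            flags.append(f"#{i}: no source cited")
        elif not all(s.verified for s in a.sources):
            flags.append(f"#{i}: unverified source")
        if not a.reasoning:
            flags.append(f"#{i}: no reasoning recorded")
    return flags


draft = [
    Assertion(
        text="The limitation period has expired.",
        sources=[Source("Placeholder v Placeholder (hypothetical)", "para 12", True)],
        reasoning="Applies the cited rule to the filing date.",
    ),
    Assertion(text="The claim is barred in every jurisdiction."),
]

for flag in audit(draft):
    print(flag)
```

The design choice worth noticing is that the audit never judges whether an assertion is legally right. It only surfaces what is unsupported, which keeps the practitioner as the final check while shrinking the amount of work that check requires.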

The efficiency-trust deficit isn’t a temporary adoption hurdle that marketing and familiarity will solve. It reflects a genuine gap between what AI systems currently deliver and what the legal profession structurally requires. Closing it is an engineering problem, a design problem, and an institutional trust problem — all at once.

The firms and products that figure this out won’t just capture a market. They’ll set the standard for what trustworthy AI looks like in any high-stakes professional domain.

This analysis draws on market research conducted as part of the VERITAS project exploring verifiable AI for legal applications. The full competitive landscape analysis and strategic framework are in the technical report.