Why Demonstrated Risk Is Ignored

Large organisations rarely fail because risks are unknown. They fail because known risks are structurally difficult to act on.


I spend most of my time trying to demonstrate that AI systems can be made to fail in dangerous ways. The technical work is hard but tractable. The part that keeps me up at night is what happens after you prove something is broken — because the answer, far more often than it should be, is nothing.

This isn’t unique to AI safety. It’s a pattern that recurs across every large organisation I’ve studied or worked inside. The gap between “we know this is a problem” and “we are doing something about it” is not an information gap. It’s a structural one.

The myth that evidence leads to action

There’s a comforting assumption baked into most risk frameworks: find the problem, present the evidence, and decision-makers will respond. It sounds reasonable. It’s also wrong.

In practice, there’s an intermediate step that rarely appears in process diagrams. Before anyone acts on evidence, they evaluate what accepting it will cost them personally and institutionally. Truth creates local costs — blame for past decisions, budget for rework, audit exposure, reputational damage, disruption to schedules that were already promised to someone important.

Where those costs aren’t explicitly managed, inaction becomes the rational choice. Not the ethical choice. Not the wise choice. But the locally rational one. And local rationality, compounded across an organisation, produces systemic failure.

Four structural reasons demonstrated risk gets buried

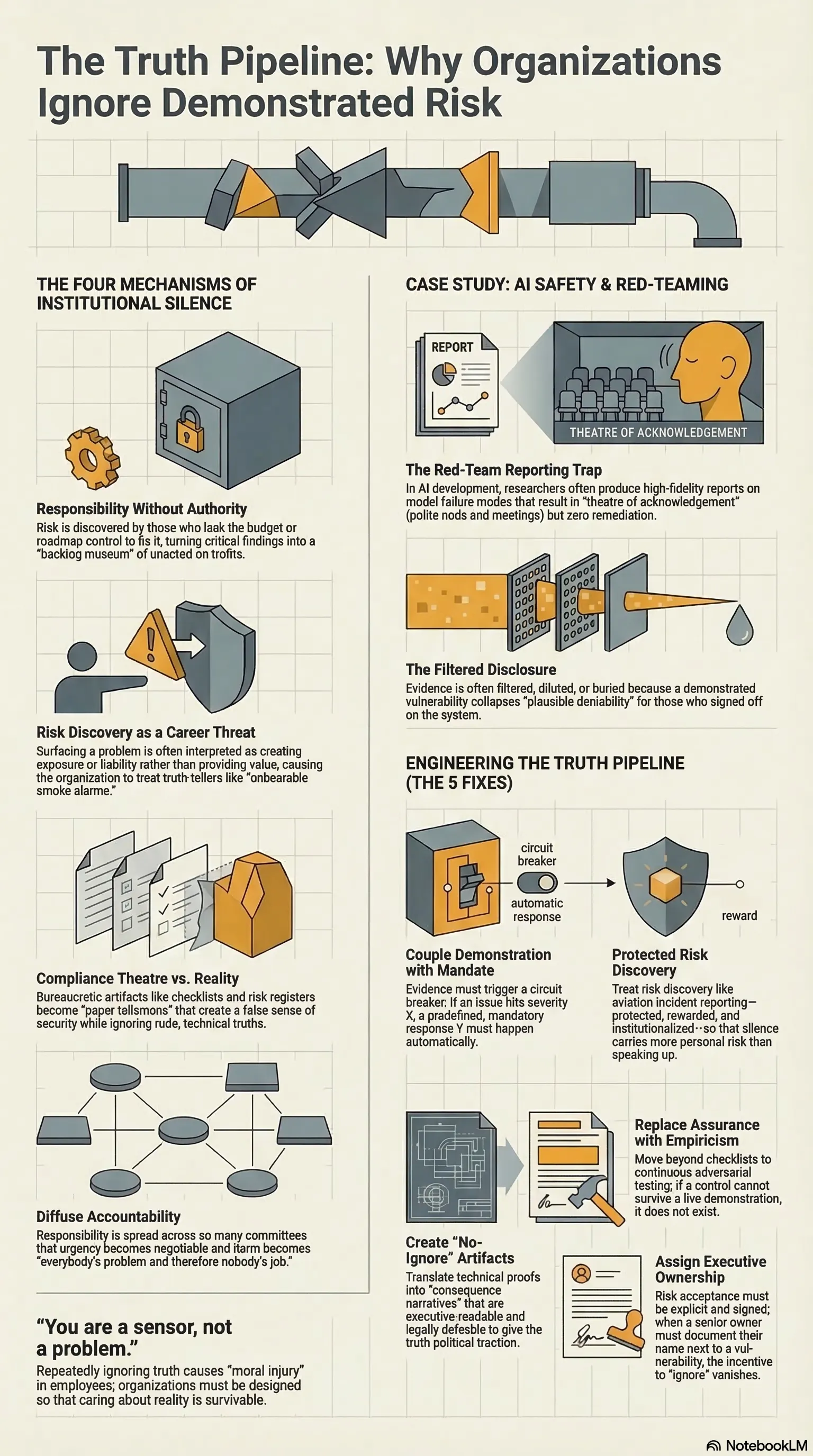

After studying this pattern across technology companies, government agencies, and research institutions, I’ve identified four mechanisms that reliably prevent demonstrated risk from reaching remediation. These aren’t personality flaws or cultural quirks. They’re structural features of how large organisations operate.

1. Responsibility without authority

The people who find risks are almost never the people who can fix them. A security researcher identifies a critical vulnerability but cannot allocate engineering time. A quality engineer flags a design flaw but cannot change the product roadmap. A junior analyst spots a compliance gap but cannot redirect budget.

The result is depressingly predictable. Findings become tickets. Tickets become backlog items. Backlogs become archives. The risk was “reported” — the organisation can point to the artefact — but nothing moved.

This is the most common failure mode I encounter in AI safety work. You red-team a system, produce a detailed report, and hand it to a team that agrees it’s a problem but lacks the authority or resources to act on it. The report exists. The vulnerability persists.

2. Risk discovery as career threat

In many organisations, surfacing a problem is treated as creating exposure rather than providing value. The incentive structure is straightforward: if you find something bad, you become associated with something bad. The messenger doesn’t always get fired — sometimes they just stop getting invited to meetings.

When the system punishes truth-telling, the system learns to prefer ignorance. People stop looking. They stop reporting. They develop a finely tuned sense for which truths are safe to speak and which will cost them. This isn’t cowardice. It’s rational adaptation to a broken incentive structure.

I’ve watched this happen in AI labs. A researcher demonstrates a failure mode. The response isn’t “thank you for finding this” — it’s “why are you testing for that?” The implicit message is clear: the organisation would rather not know.

3. Compliance substituting for reality

Governance loves artefacts. Policies, checklists, risk registers, audit trails. These are important — but they can become a substitute for empirical validation rather than a complement to it.

The failure mode is subtle. An organisation can have a complete and well-maintained risk register that bears almost no relationship to its actual risk exposure. Every box is ticked. Every control is documented. And the system has never been tested against realistic failure scenarios.

In AI, this shows up as “model cards” and “safety evaluations” that describe what a system is supposed to do without adversarially testing what it actually does under pressure. The documentation looks complete. The safety is symbolic.

4. Diffuse accountability

In complex organisations, responsibility spreads across roles, committees, and reporting lines until no single person is structurally obligated to own the outcome. Everyone can see the problem. No one is required to move it.

This is different from ignorance. It’s collective awareness without individual accountability. The risk lives in the space between job descriptions, in the gaps between teams, in the overlap between committees that each believe the other one is handling it.

Why demonstration can actually reduce urgency

Here’s the counterintuitive part. You’d expect that demonstrating a risk, proving it’s real rather than hypothetical, would increase urgency. Sometimes it does. But demonstration also collapses plausible deniability, and that can make acting more costly rather than less.

Once a risk is undeniable, the organisation faces a harder problem: if it acts now, it admits that it knew and didn’t act before. Remediation implies that prior decisions were inadequate. If those decisions were made by people who are still in the room, the incentives can favour delay, quiet containment, or reframing the risk as acceptable.

I’ve seen this dynamic repeatedly in AI safety disclosures. A demonstrated vulnerability creates more institutional discomfort than a theoretical one — precisely because it’s harder to explain away. The response isn’t always “fix it faster.” Sometimes it’s “control the narrative.”

This isn’t primarily a culture problem

It’s tempting to frame all of this as a culture issue. “We need a culture of transparency.” “We need psychological safety.” These things matter, but culture is downstream of structure.

Psychological safety doesn’t emerge because a CEO gives a speech about openness. It emerges when the person who reports a problem gets resourced to fix it instead of blamed for finding it. Ethical action becomes reliable when responsibility aligns with authority and when risk discovery is protected rather than punished.

You can’t culture your way out of a structural trap.

Engineering systems where truth can move

The practical goal isn’t more reporting. Organisations already drown in reports. The goal is a working truth pipeline — a system where demonstrated risk can move from discovery to remediation without being filtered, diluted, or buried along the way.

What that looks like in practice:

- Clear remediation authority. The person or team that receives a risk finding must have budget, time, and decision rights to act on it. If they don’t, the escalation path must be explicit and time-bounded.

- Protected escalation. Reporting a risk cannot be a career-limiting move. This requires more than a policy — it requires visible examples of people being rewarded for it.

- Empirical validation alongside compliance. Checklists and registers are necessary but insufficient. Adversarial testing, tabletop exercises, and red-team reviews must have equal standing with documentation.

- Named ownership for outcomes. Not ownership of process — ownership of whether the risk was actually reduced. Someone’s name goes on the result, not just the report.

- Incentives that treat risk discovery as value creation. If you found the problem before it became a crisis, you saved the organisation. That should be recognised, not treated as an inconvenience.

The short version: make it safer to act than to ignore.
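
As a minimal sketch of how the first and fourth points in the list above might be made concrete, the Python below models a finding that carries a named outcome owner and a time-bounded escalation window. Every name here (`Finding`, `outcome_owner`, `owner_can_act`, `escalation_window`, `next_step`) is hypothetical and invented for illustration. The rule that a finding also escalates when its window lapses without verified risk reduction is an assumption of this sketch, not something the essay prescribes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Finding:
    """A demonstrated risk, tracked by outcome rather than by report (hypothetical)."""
    title: str
    outcome_owner: str            # named person accountable for actual risk reduction
    owner_can_act: bool           # owner holds budget, time, and decision rights
    reported_at: datetime
    escalation_window: timedelta  # how long the finding may sit before it escalates
    risk_reduced_at: Optional[datetime] = None  # set only once reduction is verified


def next_step(finding: Finding, now: datetime) -> str:
    """Return 'closed', 'escalate', or 'remediate' for a finding."""
    if finding.risk_reduced_at is not None:
        return "closed"
    # No authority to act: escalate at once instead of letting it age in a backlog.
    if not finding.owner_can_act:
        return "escalate"
    # Assumption of this sketch: authority exists, but the window has lapsed
    # without verified reduction, so the finding moves up anyway.
    if now - finding.reported_at > finding.escalation_window:
        return "escalate"
    return "remediate"


# Illustrative values only; not drawn from any real case.
reported = datetime(2024, 1, 1)
example = Finding("example finding", "named.owner", True, reported, timedelta(days=14))
print(next_step(example, reported + timedelta(days=3)))   # remediate
print(next_step(example, reported + timedelta(days=30)))  # escalate
```

The design choice worth noticing is that the record closes only when `risk_reduced_at` is set. Filing the report, or acknowledging it, changes nothing about its state.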

The moral cost of structural inaction

There’s a human dimension to this that organisational charts don’t capture. When people are required to observe and report reality but are structurally prevented from influencing outcomes, the result is a specific kind of burnout. Not exhaustion from overwork — exhaustion from futility.

In AI safety, this is endemic. Researchers spend months demonstrating that a system is dangerous, only to watch it ship anyway. The finding was acknowledged. The risk was accepted. The person who found it carries the knowledge that they did their job and it didn’t matter.

More quietly, repeated suppression of demonstrated truth damages the organisation’s ability to know itself. Each buried finding makes the next one easier to bury. The system’s model of its own risk diverges further from reality until the gap becomes a crisis.

The point

Demonstrated risk is ignored not because people are irrational, but because the systems they operate within make inaction locally rational. The fix isn’t better evidence or louder warnings. It’s redesigning the structures — authority, incentives, accountability — so that truth can move and remediation becomes the default rather than the exception.

Every organisation says it wants to know what’s broken. The test is whether it has built the machinery to act on the answer.

This essay is adapted from the Why Demonstrated Risk Is Ignored research project. The full paper, case studies, and structural analysis are available in the research repository.