The AI Productivity J-Curve: Why Most Enterprise AI Fails

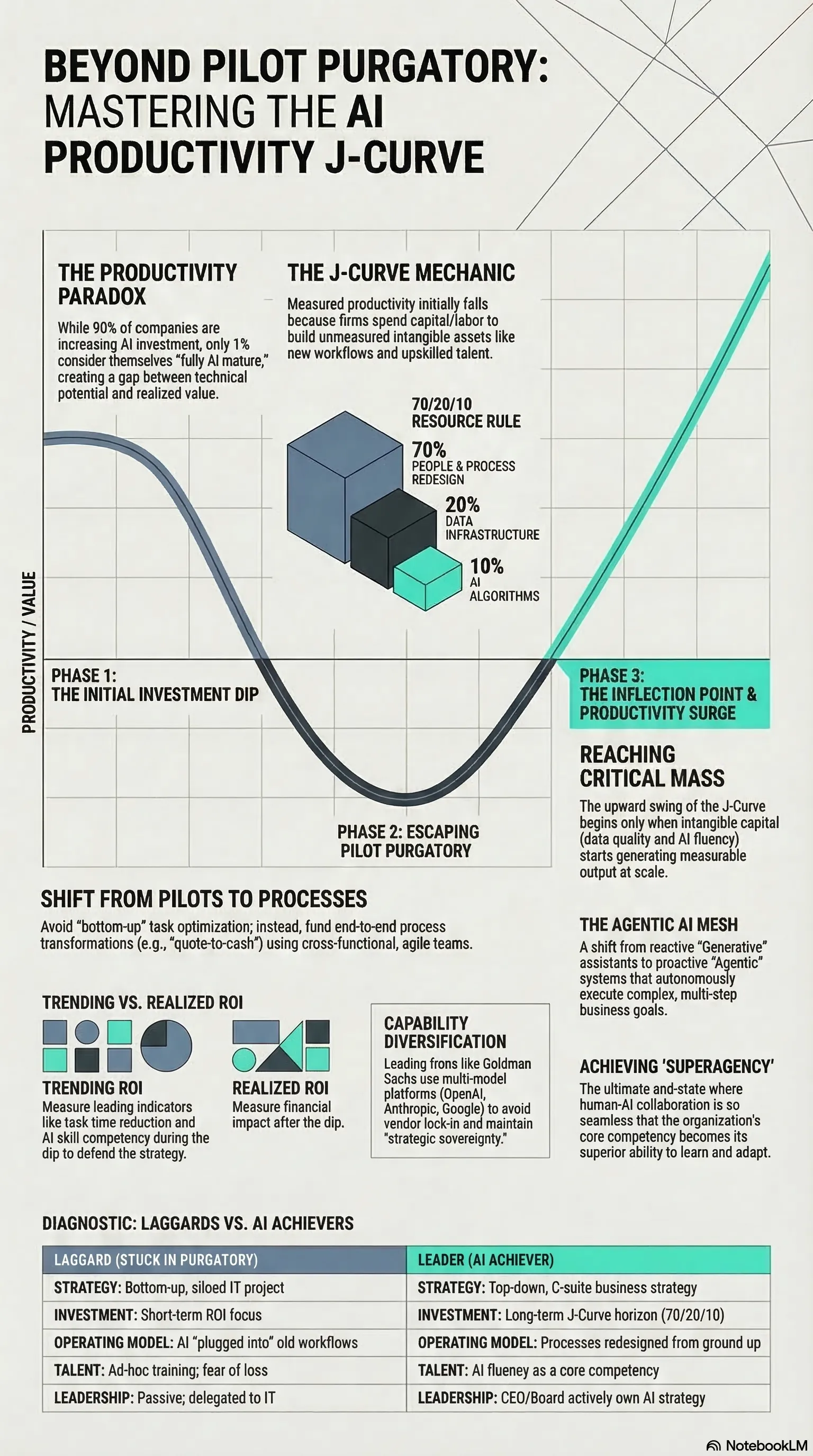

90% of companies plan to increase AI investment. Only 1% consider themselves AI-mature. The J-Curve explains why.

Over 90% of companies plan to increase their AI investment. Hyperscaler capital expenditure is on track to hit $405 billion. Every boardroom has an AI strategy slide deck.

And yet only 1% of organizations consider themselves fully AI-mature. Twelve percent have advanced enough to see meaningful business transformation. The rest are stuck — running pilots that never scale, funding experiments that never graduate to production.

This isn’t a technology problem. It’s an economic one. And there’s a framework that explains exactly what’s happening.

The Productivity Paradox, Again

We’ve seen this movie before. In 1987, Robert Solow observed that you could see the computer age everywhere except in the productivity statistics. The same paradox is playing out with AI: extraordinary technical capability coexisting with stubbornly flat productivity numbers.

The instinctive reaction is to blame the technology, or the teams deploying it, or the data. But the pattern is too consistent for that. Three-quarters of organizations are using AI in at least one function. The technology works. What’s failing is something more structural.

Enter the J-Curve

Economists Erik Brynjolfsson, Daniel Rock, and Chad Syverson developed a framework that makes the paradox legible. They call it the Productivity J-Curve, and they argue it applies to general-purpose technologies as a class: steam, electricity, computing, and now AI.

The core insight: when organizations adopt a transformative technology, measured productivity doesn’t just stagnate. It initially drops. Then, after a period of painful investment, it rises — often dramatically. Plot it over time and you get a J-shaped curve.

Why the dip? Because adopting a general-purpose technology requires two kinds of investment. The first is visible: hardware, software, licenses — the stuff that shows up on balance sheets. The second is invisible, and it’s where most of the actual value creation happens:

- Redesigned business processes. You can’t bolt AI onto a workflow designed for manual execution and expect transformation.

- Data governance. Models need clean, accessible, trustworthy data. Most enterprises have none of the above.

- Workforce reskilling. People need to learn not just how to use new tools, but how to work differently.

- New business models. The real gains come from doing things that were previously impossible, not from doing existing things slightly faster.

During the early phase, organizations spend real, measurable resources — capital, time, attention — building these intangible assets. But because the assets themselves aren’t captured in productivity metrics, the numbers look worse. You’re investing heavily and your dashboard says you’re going backward.

The upswing only begins when the intangible capital reaches critical mass and starts generating measurable output. At that point, measured productivity surges, and can even be overstated, because the output those hidden assets generate is counted while the assets themselves never appeared as inputs.
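To make the mechanism concrete, here is a minimal toy simulation in Python. It is a sketch under invented assumptions, not the model from the Brynjolfsson, Rock, and Syverson paper: the function name `simulate_j_curve` and every parameter (the share of effort diverted, the length of the build phase, the payoff per unit of intangible stock) are hypothetical and chosen only to show the shape.

```python
def simulate_j_curve(years=10, build_years=4, diverted_share=0.25,
                     payoff_per_unit=0.15):
    """Toy model of measured productivity relative to a baseline of 1.0.

    All parameters are illustrative assumptions, not empirical estimates.
    """
    intangible_stock = 0.0  # accumulated process, data, and skills capital
    path = []
    for year in range(years):
        # During the build phase, part of the organization's effort goes
        # into intangible assets that no dashboard counts as output.
        share = diverted_share if year < build_years else 0.0

        # Measured output: only the undiverted effort is counted, boosted
        # by whatever intangible stock already exists.
        measured = (1.0 - share) * (1.0 + payoff_per_unit * intangible_stock)
        path.append(round(measured, 3))

        # This year's diverted effort becomes usable stock next year.
        intangible_stock += share
    return path


path = simulate_j_curve()
for year, value in enumerate(path):
    marker = "below baseline" if value < 1.0 else "above baseline"
    print(f"year {year}: {value:.3f} ({marker})")
```

With these made-up defaults, measured productivity sits below the pre-adoption baseline for as long as effort is being diverted, then ends above it once the stock is in place. Changing the parameters changes the depth and length of the trough, but not the basic shape.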

Pilot Purgatory Is the J-Curve in Disguise

This framework reframes the most common failure mode in enterprise AI. “Pilot purgatory” — the state where AI experiments succeed in isolation but never scale — isn’t a mysterious organizational disease. It’s the predictable consequence of applying the wrong evaluation model to a J-Curve technology.

Here’s the mechanism. A team launches a promising AI pilot. It works technically. But it’s operating in the trough of the J-Curve: the organization hasn’t yet built the intangible capital (process redesign, data infrastructure, workforce capability) needed for the pilot to deliver enterprise-scale returns. When leadership applies a standard short-term ROI model, the numbers don’t justify scaling. Funding gets cut. The pilot dies.

Multiply this across dozens of initiatives and you get an organization that’s perpetually experimenting, perpetually disappointed, and perpetually stuck.

The critical insight is that this isn’t a failure of the pilots. It’s a failure of the measurement framework. You’re evaluating a multi-year infrastructure investment with a quarterly returns model. Of course it looks like it’s not working.
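The mismatch can be illustrated with a few lines of Python. The cash flows below are invented for illustration, as are the 10% discount rate and the helper name `npv`. The only point is that the same initiative can look like a loss on a short horizon and a gain on a long one.

```python
def npv(cash_flows, rate):
    """Net present value of yearly cash flows, with year 0 undiscounted."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows))


# Hypothetical net cash flows per year for one AI initiative (arbitrary units).
# The early years are negative because the spend goes into process redesign,
# data infrastructure, and reskilling before any return is visible.
yearly_cash_flows = [-100, -40, 30, 120, 180]
discount_rate = 0.10  # assumed for illustration

# Same initiative, two evaluation windows.
short_horizon_value = npv(yearly_cash_flows[:2], discount_rate)
full_horizon_value = npv(yearly_cash_flows, discount_rate)

print(f"Judged on the first two years: {short_horizon_value:.1f}")
print(f"Judged on all five years:      {full_horizon_value:.1f}")
```

A review that only sees the first window would cut funding. A review that sees the full window would not. Nothing about the initiative changed between the two lines, only the measurement horizon.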

The Intangible Capital Deficit

Once you see the J-Curve, the diagnosis shifts. The problem isn’t that enterprises need to buy more AI. It’s that they’re chronically underinvesting in the complementary intangible capital that makes AI productive.

BCG’s research quantifies this nicely with a 70/20/10 heuristic: in successful AI transformations, 70% of effort goes to people and process redesign, 20% to data and infrastructure, and 10% to the algorithms themselves. Put differently, for every dollar spent on a model, roughly two dollars need to go into data and infrastructure and seven into transforming the organization around it.
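As a quick sanity check on what that heuristic implies for a budget, here is a small Python sketch. The function name `implied_budget` and the example spend are hypothetical. The shares are the 70/20/10 figures quoted above, and treating "effort" as dollars is a simplifying assumption.

```python
# Shares from the 70/20/10 heuristic, kept as whole percentages so the
# arithmetic stays exact for round numbers.
SHARES = {
    "people_and_process": 70,
    "data_and_infrastructure": 20,
    "algorithms": 10,
}


def implied_budget(algorithm_spend):
    """Scale the heuristic from a known algorithm/model spend.

    Assumes effort maps one-to-one onto budget, which is a simplification.
    """
    one_percent = algorithm_spend / SHARES["algorithms"]
    return {name: one_percent * pct for name, pct in SHARES.items()}


budget = implied_budget(1_000_000)  # hypothetical model spend
for name, amount in budget.items():
    print(f"{name}: {amount:,.0f}")
print(f"total: {sum(budget.values()):,.0f}")
```

Under these assumptions, a plan that budgets only for the model line covers a tenth of what the heuristic says the transformation needs.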

Most enterprises have this ratio inverted. They over-index on technology procurement and under-index on everything else. Then they wonder why the technology isn’t delivering.

The Bottom-Up Experiment Trap

There’s a subtler failure mode worth calling out. The conventional wisdom in enterprise innovation is to run a portfolio of small, bottom-up experiments: fund lots of pilots, see what sticks, scale the winners. This feels prudent. It’s also a direct contributor to pilot purgatory.

The problem is that isolated pilots, by design, can’t generate the systemic changes needed for transformative ROI. An AI tool that optimizes one task inside one department produces localized efficiency gains that are too small to justify the enterprise-wide investment in data infrastructure, process redesign, and workforce development that scaling requires.

The strategic unit of analysis needs to shift from the pilot to the business process. Instead of funding fifty disconnected experiments, identify a critical end-to-end workflow — customer onboarding, product development, order-to-cash — and charter a cross-functional team to transform it comprehensively. That’s how you build the intangible capital that gets you through the J-Curve trough and out the other side.

What This Means in Practice

The J-Curve framework doesn’t tell you AI will definitely pay off. It tells you that if it’s going to pay off, the path runs through a valley of reduced measured performance, and that the valley is the investment — not a sign the investment has failed.

For leadership, the implications are concrete:

Expect the dip. If your AI investments aren’t showing returns yet, that’s consistent with the J-Curve and is not, by itself, evidence against continuing. The question isn’t whether returns are visible, but whether you’re building the intangible capital that produces them.

Measure what matters in the trough. During the early phase, track leading indicators: adoption rates, employee time freed for strategic work, process cycle time reduction, data quality improvements. These are evidence that the intangible capital is accumulating. Financial returns are lagging indicators — they’ll come, but demanding them too early kills the investment.

Fund the rewiring, not just the tools. The technology is the easy part. The hard part — and the part that actually creates value — is redesigning processes, reskilling people, and building data infrastructure. Budget accordingly.

Think in processes, not pilots. Stop funding isolated experiments. Start funding end-to-end process transformations with cross-functional teams and multi-year horizons.

The 1% of organizations that have reached AI maturity didn’t get there by running better pilots. They got there by recognizing that AI is a general-purpose technology that requires a fundamentally different investment model — one that accounts for the J-Curve and funds the invisible work that makes the visible technology productive.

This post draws on research from the Orchestrix strategic research project. The full analysis, including maturity frameworks and implementation blueprints, is in the technical report.